Hello everybody,

Yes, I know you all wanted to learn about MySQL queries, but I am still preparing the database (don’t worry, it’s coming; it’s just taking a while to prepare). Since I did mention I’ll be doing analyses on this blog, that is what I will be doing in this post. It’s basically an expansion of the TV show data set from R Lesson 4: Logistic Regression Models and R Lesson 5: Graphing Logistic Regression Models, with 3 new variables.

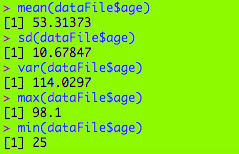

So, as we should always do, let’s load the file into R and get an understanding of our variables, with str(file).
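
Here is a minimal sketch of that loading step. The file name `tvshows.csv`, the `Show` and `Rating` column names, and all of the rows are made up so the example runs on its own; only the two long column names come from this post.

```r
# Invented rows standing in for the real spreadsheet, which is not reproduced here.
toy <- data.frame(
  Show = c("Show A", "Show B", "Show C"),   # hypothetical column
  X..of.seasons..17.18. = c(3, 12, 1),      # season count (name from this post)
  X2018.19.renewal. = c(1, 1, 0),           # 1 = renewed, 0 = cancelled
  Rating = c(2, 3, 4)                       # hypothetical column name
)

# Write the toy data to a temporary CSV, then load it the usual way.
path <- file.path(tempdir(), "tvshows.csv") # hypothetical file name
write.csv(toy, path, row.names = FALSE)

file <- read.csv(path)
str(file)  # lists each variable with its type and first few values
```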

As for the new variables, let’s explain. By the way, the numbers you see for the new variables are numeric codes that stand in for categories, much like dummy variables (remember those?). I thought coding them this way would be a better way to categorize the shows.

- Rating: a TV show’s parental rating (no, not how good it is)

  - 1: TV-G

  - 2: TV-PG

  - 3: TV-14

  - 4: TV-MA

  - 5: Not applicable

- Usual day of week: the day of the week a show usually airs its new episodes

  - 1: Monday

  - 2: Tuesday

  - 3: Wednesday

  - 4: Thursday

  - 5: Friday

  - 6: Saturday

  - 7: Sunday

  - 8: Not applicable (either the show airs on a streaming service or airs 5 days a week like a talk show or doesn’t have a consistent airtime)

- Medium: what network the show airs on

  - 1: Network TV (CBS, ABC, NBC, FOX or the CW)

  - 2: Cable TV (Comedy Central, Bravo, HBO, etc.)

  - 3: Streaming TV (Amazon, Hulu, etc.)
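
One way to make the codes above readable in R is to turn them into labelled factors. This is only a sketch and not necessarily what was done for this post: the column names `Rating`, `Usual.day.of.week`, and `Medium` are assumed, and the rows are made up. A side benefit is that `glm()` expands a factor into 0/1 dummy variables on its own.

```r
# A few made-up shows, using the numeric codes listed above.
shows <- data.frame(
  Rating = c(1, 3, 4, 5),             # hypothetical column names
  Usual.day.of.week = c(2, 7, 8, 1),
  Medium = c(1, 2, 3, 1)
)

# Attach a label to each code so output and plot legends are readable.
shows$Rating <- factor(shows$Rating, levels = 1:5,
  labels = c("TV-G", "TV-PG", "TV-14", "TV-MA", "Not applicable"))

shows$Usual.day.of.week <- factor(shows$Usual.day.of.week, levels = 1:8,
  labels = c("Monday", "Tuesday", "Wednesday", "Thursday",
             "Friday", "Saturday", "Sunday", "Not applicable"))

shows$Medium <- factor(shows$Medium, levels = 1:3,
  labels = c("Network", "Cable", "Streaming"))

str(shows)
```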

I decided to fit three logistic regression models (one for each of the new variables). The renewed/cancelled variable (known as X2018.19.renewal.) is still the binary dependent variable, and the other independent variable I used in all three models is season count (known as X..of.seasons..17.18.).

First, remember to install (and use the library function for) the ggplot2 package. This will come in handy for the graphing portion.

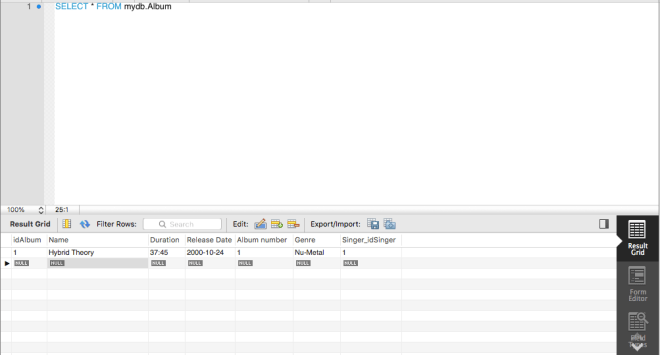

Here’s my first logistic regression model, with my binary dependent variable and two independent variables (season count and rating). If you’re wondering what the output means, check out R Lesson 4: Logistic Regression Models for a more detailed explanation.
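
For readers who want to try a model of this shape, here is a hedged sketch on simulated data. The two long column names come from this post; the `Rating` column name, the choice to treat rating as a factor, and every number in the simulated data are assumptions, so the output will not match the post’s model.

```r
set.seed(1)
n <- 200

# Simulated stand-in for the TV show data (not the real data set).
tv <- data.frame(
  X..of.seasons..17.18. = sample(1:30, n, replace = TRUE),
  Rating = factor(sample(1:5, n, replace = TRUE), levels = 1:5)  # hypothetical name
)

# Simulate renewals so that longer-running, higher-coded shows renew more often.
log_odds <- -1 + 0.05 * tv$X..of.seasons..17.18. + 0.5 * as.integer(tv$Rating)
tv$X2018.19.renewal. <- rbinom(n, 1, plogis(log_odds))

# Logistic regression: renewal explained by season count and rating.
model1 <- glm(X2018.19.renewal. ~ X..of.seasons..17.18. + Rating,
              data = tv, family = binomial)
summary(model1)
```

Because `Rating` is a factor here, the summary shows one coefficient per rating level other than the baseline (TV-G).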

Here are two functions you need to help set up the graph. The top function helps set up the grid and designates which categorical variable you want to use in your graph. The bottom function predicts the probabilities of renewal for shows in each category. In this case, it would be the rating category (the ones with TV-G, TV-PG, etc.).
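
The original two calls are not reproduced here, so the following is a sketch of one common way to do these two steps in base R, assuming `expand.grid()` for the grid and `predict()` with `type = "response"` for the probabilities. The short column names `seasons`, `Rating`, and `renewed` and the simulated data are stand-ins.

```r
set.seed(1)
n <- 200

# Simulated stand-in data with short, hypothetical column names.
tv <- data.frame(
  seasons = sample(1:30, n, replace = TRUE),
  Rating = factor(sample(1:5, n, replace = TRUE), levels = 1:5)
)
tv$renewed <- rbinom(n, 1, plogis(-1 + 0.05 * tv$seasons + 0.5 * as.integer(tv$Rating)))
model1 <- glm(renewed ~ seasons + Rating, data = tv, family = binomial)

# Step 1: a grid with every season count crossed with every rating level.
grid <- expand.grid(seasons = 1:30, Rating = factor(1:5, levels = 1:5))

# Step 2: predicted probability of renewal for each row of the grid.
grid$prob <- predict(model1, newdata = grid, type = "response")
head(grid)
```

Using `type = "response"` matters: without it, `predict()` returns log-odds rather than probabilities.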

Here’s the ggplot function. geom_line() draws a line for each level of your categorical variable; here there are 5 lines for the 5 categories.
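
As a rough illustration of what such a call can look like, here is a sketch that plots one line per rating. The probabilities come from made-up coefficients rather than from the post’s fitted model, and the names `grid`, `seasons`, `prob`, and `Rating` are assumptions.

```r
library(ggplot2)

# Stand-in prediction grid: 30 season counts for each of the 5 ratings.
grid <- expand.grid(
  seasons = 1:30,
  Rating = factor(1:5, labels = c("TV-G", "TV-PG", "TV-14", "TV-MA", "Not applicable"))
)

# Made-up logistic curve, used only so there is something to draw.
grid$prob <- plogis(-2 + 0.05 * grid$seasons + 0.8 * as.integer(grid$Rating))

# Mapping Rating to colour makes geom_line() draw one line per level.
p <- ggplot(grid, aes(x = seasons, y = prob, colour = Rating)) +
  geom_line() +
  labs(x = "Seasons aired", y = "Probability of renewal")
print(p)
```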

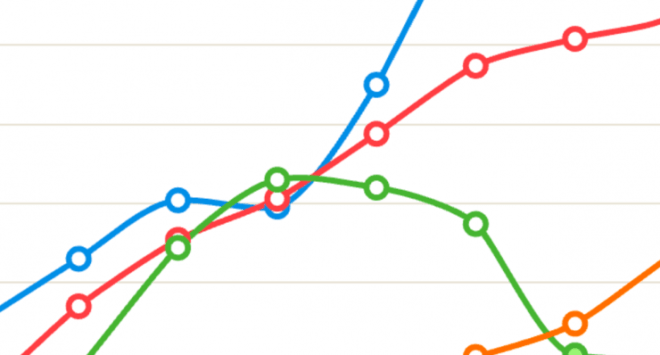

Here’s the graph. As you see, there are five lines, one for each of the ratings. What are some inferences that can be made?

- The TV-G shows (category 1) usually have the lowest chance of renewal. In this model, a TV-G show would need to have run for roughly 22 seasons to reach at least a 50% chance of renewal. (Granted, the only TV-G show in this data set is Fixer Upper, which was not renewed.)

- The TV-PG shows have a slightly better chance of renewal, as the chance of renewal for these shows is at least 25%. To reach at least a 50% chance of renewal, a TV-PG show such as The Simpsons would need to have run for only about 17 seasons, not 22.

- The TV-14 shows have at least a 50% chance of renewal, regardless of how many seasons they have run. However, they would need to have run for at least 25 seasons to reach a 75% chance of renewal (SNL would be the only applicable example here, as it was renewed and has run for 43 seasons).

- The TV-MA shows have at least a 76% (approximately) chance of renewal no matter how many seasons they have aired. Shows like South Park, Archer, Real Time, Big Mouth and Orange is the New Black are all TV-MA, and all of them were renewed.

- The unrated shows had the best chances of renewal, with a minimum of approximately 92%. (Granted, Watch What Happens Live! is the only unrated show on this list.)
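
Thresholds like the ones above can be read off the graph, but they can also be computed from the model’s coefficients by solving the logistic equation for season count. The sketch below uses made-up coefficients, picked so the answers land near the 22 and 17 seasons mentioned above; they are not the values from the post’s model, and `seasons_needed()` is a hypothetical helper.

```r
# Made-up coefficients for illustration only.
b0 <- -2.2         # intercept (log-odds for the baseline rating at 0 seasons)
b_seasons <- 0.1   # change in log-odds per additional season
b_rating <- 0.5    # extra log-odds for one non-baseline rating level

# Solve  qlogis(p) = intercept + offset + slope * seasons  for seasons.
seasons_needed <- function(p, intercept, slope, offset = 0) {
  (qlogis(p) - intercept - offset) / slope
}

seasons_needed(0.5, b0, b_seasons)            # baseline rating: about 22
seasons_needed(0.5, b0, b_seasons, b_rating)  # shifted rating: about 17
```

With a positive slope, a result of zero or less means the category is already above that probability at any season count, which is the situation described for the TV-14 line and the 50% mark.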

Next, we repeat the process used to create the first model’s plot for the next two models (usual day of week and medium).
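
Since the same steps are repeated for each variable, they can be wrapped in a small helper. This is a sketch under assumptions: `prediction_grid()` is a hypothetical function, the column names `seasons`, `renewed`, `Day`, and `Medium` are stand-ins, and the data are simulated.

```r
# Fit renewed ~ seasons + <predictor>, then return predicted probabilities
# for every combination of season count and predictor level.
prediction_grid <- function(data, predictor) {
  f <- reformulate(c("seasons", predictor), response = "renewed")
  model <- glm(f, data = data, family = binomial)
  lv <- levels(data[[predictor]])
  grid <- expand.grid(
    seasons = seq(min(data$seasons), max(data$seasons)),
    level = factor(lv, levels = lv)
  )
  names(grid)[2] <- predictor  # predict() looks columns up by name
  grid$prob <- predict(model, newdata = grid, type = "response")
  grid
}

set.seed(2)
n <- 300
tv <- data.frame(  # simulated stand-in data
  seasons = sample(1:30, n, replace = TRUE),
  Day = factor(sample(1:8, n, replace = TRUE), levels = 1:8),
  Medium = factor(sample(1:3, n, replace = TRUE), levels = 1:3)
)
tv$renewed <- rbinom(n, 1, plogis(-1 + 0.05 * tv$seasons + 0.4 * as.integer(tv$Medium)))

day_grid <- prediction_grid(tv, "Day")
medium_grid <- prediction_grid(tv, "Medium")
head(medium_grid)
```

Each returned grid can then be passed to ggplot in the same way as for the rating model.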

What are some inferences that can be made? (I know this graph is hard to read, but we can still make observations from it.)

- The orange line (representing Tuesday shows) is the lowest on the graph, so this means Tuesday shows usually had the lowest chances of renewal. This makes sense, as Tuesday shows like LA to Vegas, The Mick, and Roseanne were all cancelled.

- On the other end, the pink line (representing shows that either aired on streaming services, did not have a consistent time slot, or aired every day like talk shows) is the highest on the graph, so this means shows without a regular time slot had the best chances at renewal (such as Atypical, Jimmy Kimmel Live!, and House of Cards).

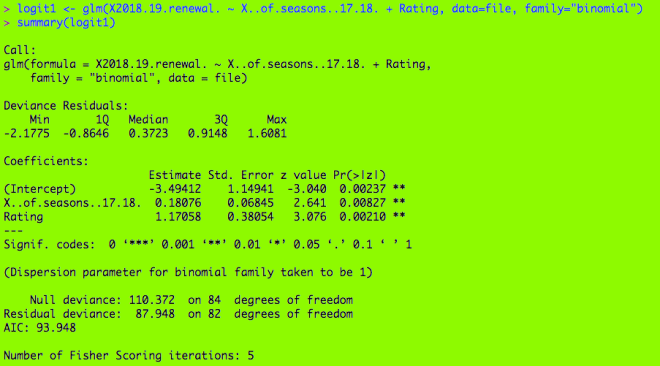

What inferences can we make from this graph?

- The network shows (from the 5 major broadcast networks: CBS, ABC, NBC, FOX and the CW) had the lowest chances of renewal. At least 11 seasons would be needed for a minimum 50% chance of renewal.

  - Examples include The Simpsons (29 seasons), Family Guy (16 seasons), The Big Bang Theory (11 seasons), and NCIS (15 seasons), all of which were renewed.

- The cable shows (from channels such as Comedy Central, HBO, and Bravo) have a minimum 58% (approximately) chance of renewal, but at least 15 seasons would be needed for a minimum 70% chance of renewal.

  - Examples include South Park (21 seasons) and Real Time (16 seasons), both of which were renewed.

- The streaming shows (from services such as Netflix, Hulu, or CBS All Access) had the best chances of renewal (approximately 76% at minimum). At least 30 seasons would be needed for a 90% chance of renewal.

  - That threshold doesn’t mean much yet, as streaming shows have only been around since the early 2010s, so none of them has run anywhere near 30 seasons.

Thanks for reading, and I’ll be sure to have the MySQL database ready so you can start learning about querying.

Michael